Small Language Models: The Hedgehog, The Fox, and the End of the "God Model"

Note: The history of technology swings between two poles: Centralization and Decentralization. AI has been stuck in an Imperial phase, worshipping trillion-parameter "God Models" like GPT-5. In 2026, the empire is fracturing. This article argues that the future belongs to the Small Language Model (SLM), a technological return to the specific, the local, and the sovereign.

In his famous 1953 essay, the philosopher Isaiah Berlin divided thinkers into two categories, borrowing a fragment from the Greek poet Archilochus:

"The fox knows many things, but the hedgehog knows one big thing."

The Fox is the generalist. He is fascinated by the infinite variety of the world. He pursues many ends, often unrelated and even contradictory. The Hedgehog is the specialist. He relates everything to a single, central vision. He ignores the noise to focus on one universal organizing principle.

For the past three years, Silicon Valley has been obsessed with building the Ultimate Fox. Models like GPT-5 and Gemini Ultra are designed to know everything. They are trained on the entire internet. They are "God Models," vast encyclopedias of human cognition centralized in server farms.

But as we settle into 2026, the strategy is shifting. We are realizing that while Foxes are impressive at dinner parties, Hedgehogs build the world.

We are entering the era of the Small Language Model (SLM). And this isn't just a technical optimization; it is a philosophical correction.

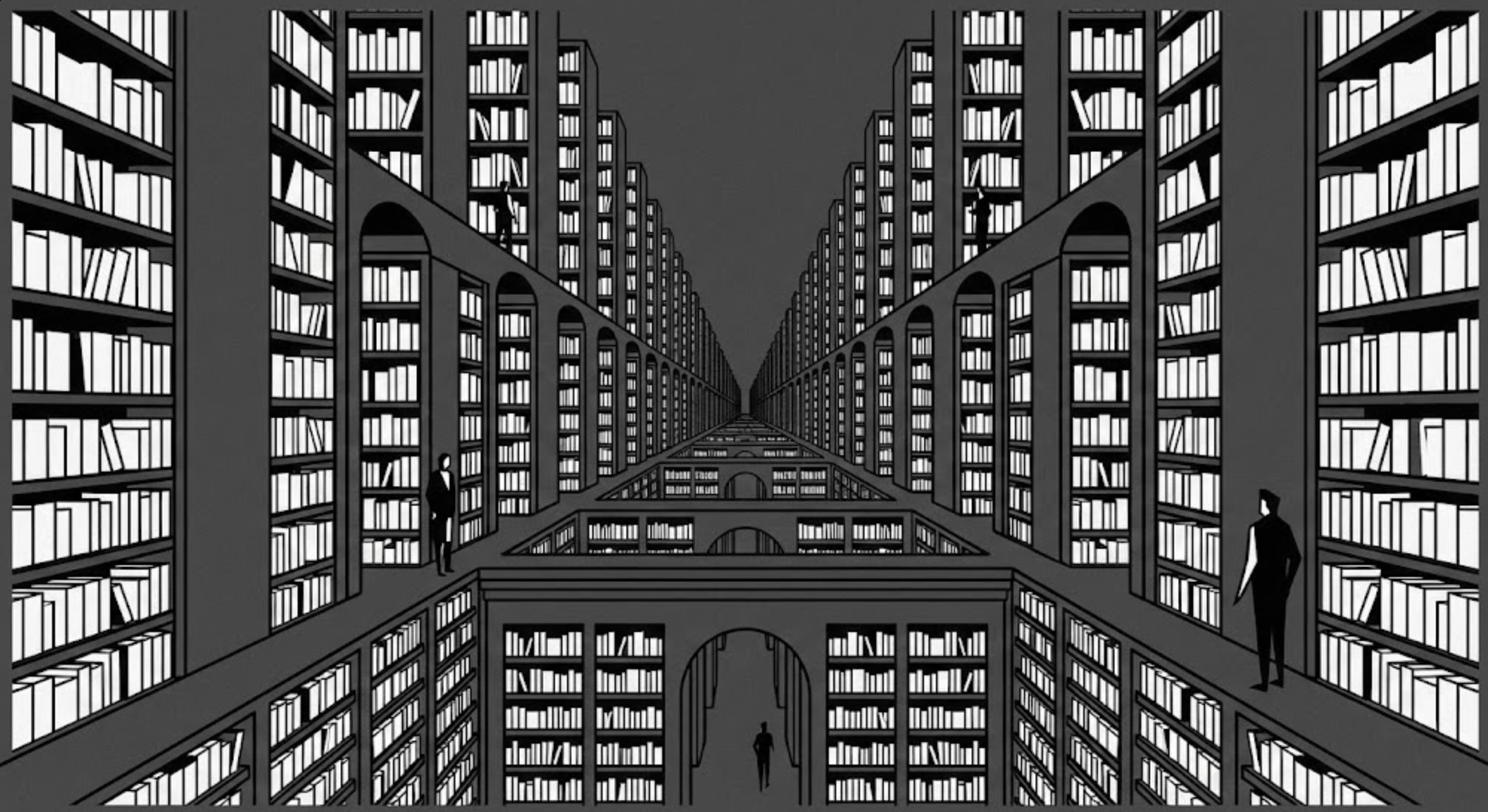

1. The Borgesian Map: Why "More" is Less

Jorge Luis Borges wrote a fable about an empire where the Cartographers became so obsessed with accuracy that they created a Map of the Empire whose size was that of the Empire. It coincided point for point with reality. It was perfect. It was also useless.

Giant LLMs are suffering from the Borges problem. By trying to contain the entirety of human knowledge, they become unwieldy. When you ask a trillion-parameter model to summarize a legal contract, you are activating a neural network that also "knows" how to write fan fiction and diagnose rare diseases. You are bringing a nuclear reactor to light a cigarette.

This leads to the Generalist Trap:

- The LLM (The Fox): "I can write a sonnet, code in C++, and explain quantum physics." (Jack of all trades, master of none).

- The SLM (The Hedgehog): "I do not know what poetry is. But I can look at a SQL database and write the perfect query, every single time, in 10 milliseconds."

2. The Persona Illusion: Why "Prompting" Isn't Enough

A common counter-argument arises here: "But GPT-5 has 'Personas.' I can just tell it to act like a SQL specialist."

This is the Persona Illusion. When you prompt a giant model to "be a specialist," you are merely putting a mask on a generalist.

- The Drift Problem: A mask can slip. Because the general knowledge is still there, a "Persona" can still be tricked (jailbroken) or accidentally hallucinate information from outside its domain.

- The Compute Problem: Under the hood, the model still loads its trillions of parameters. You are paying for a Ferrari to drive 10 mph.

An SLM doesn't need a mask; it is lobotomized by design. It has far less room to drift because it only knows the specific task.

3. The Hidden Tax: Fragility and Engineering

However, we must address the elephant in the room. If SLMs are so great, why isn't everyone using them? Because Hedgehogs are stubborn.

An LLM is "tolerant." You can give it a vague, poorly written prompt, and it will use its vast world knowledge to guess your intent. An SLM is "intolerant." If your prompt is unclear, or if the task requires "peripheral knowledge" (connecting two unrelated concepts outside its training data), the SLM will fail catastrophically.

This creates a new trade-off:

- With an LLM, you save on Engineering but pay for Compute.

- With an SLM, you save on Compute but pay for Engineering.

To make an SLM work, you cannot just "chat" with it. You need to build a rigorous pipeline, usually RAG (Retrieval-Augmented Generation), to feed it the exact facts it needs. The SLM is not a brain; it is a processor. If you feed it garbage, it cannot "figure it out." It will hallucinate an answer because it lacks the common sense to know it is confused.
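To make the "feed it the exact facts" step concrete, here is a minimal sketch of the retrieval half of such a pipeline. It is an illustration under stated assumptions, not a production design: naive keyword overlap stands in for a real embedding-based retriever, and the names `tokenize`, `retrieve`, and `build_prompt` are hypothetical. The one design choice worth noticing is that the pipeline refuses to build a prompt when nothing relevant is found, instead of letting the small model guess.

```python
import re
from typing import List, Optional, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Rank documents by shared words with the question (assumption: a naive
    stand-in for an embedding search) and keep the best non-zero matches."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_prompt(question: str, documents: List[str]) -> Optional[str]:
    """Return a prompt grounded in retrieved facts, or None if nothing matched."""
    facts = retrieve(question, documents)
    if not facts:
        # No supporting facts: do not call the SLM at all.
        return None
    context = "\n".join(f"- {fact}" for fact in facts)
    return (
        "Answer using only the facts below.\n"
        f"Facts:\n{context}\n"
        f"Question: {question}\n"
    )


if __name__ == "__main__":
    docs = [
        "The orders table has columns id, customer_id, total.",
        "The customers table has columns id, name, email.",
        "Office hours are 9 to 5.",
    ]
    print(build_prompt("Which columns does the orders table have?", docs))
```

The engineering cost the section describes lives in exactly these functions: the quality of what `retrieve` returns bounds the quality of what the SLM can say.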

4. From The Panopticon to The Polis

There is a political dimension to this architecture. The "God Model" era was one of High Modernism. It relied on massive centralization. To use intelligence, you had to send your data to the Oracle (the Cloud API). This creates a relationship of dependency. You are a serf on the digital estate of the model provider.

The SLM era represents a return to Sovereignty. Because SLMs (like Phi-4 or Mistral 7B) can run on a local device, they allow for Edge Intelligence.

- Privacy as a Right: Your data never leaves the laptop. The "brain" comes to the data, not the other way around.

- Tooling vs. Enframing: Heidegger warned that technology "enframes" us. A giant cloud API enframes us (we feed it). A local SLM is a tool in the classical sense (it serves us).

5. The Economics of "Small is Beautiful"

In 1973, the economist E.F. Schumacher published Small is Beautiful. He argued against "gigantism" in industry, advocating for "appropriate technology"—technology that is human-scale, cheap, and decentralized.

SLMs are the realization of Appropriate AI. Calling a massive model for every trivial task creates a "Tax on Curiosity." Once you own the hardware, SLMs drive the marginal cost of each query toward zero.

- Latency: An SLM running locally feels like an extension of your own thought process (instant).

- Cost: You stop paying rent to the cloud. You own the compute.

6. The New Architecture: The "Swarm of Experts"

If the Monolith is dead, what replaces it? The Society of Mind.

Marvin Minsky theorized that the human mind is not one single "self," but a collection of mindless agents that interact to create intelligence. The new enterprise architecture mirrors this. We are moving from a Monolithic architecture to a Router Architecture.

Imagine a "Router" that sits between the user and the models:

- User: "What is the capital of France?" -> Router: Sends to the 1B-parameter "Trivia Hedgehog" (Cost: Free).

- User: "Analyze this proprietary medical record." -> Router: Sends to the 7B-parameter "HIPAA Hedgehog" (local, secure).

- User: "Write a poem about the singularity." -> Router: Sends to the 1T-parameter "Creative Fox" (Cloud API).

You only pay for the Fox when you need the Fox. For everything else, you rely on the Hedgehogs.
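The routing idea above can be sketched in a few lines. This is a toy illustration, not a reference implementation: the model names, the keyword rules, and the `Route` and `route` helpers are all hypothetical, and a real router would more likely use a small classifier than substring matching. Nothing here calls an actual model; the function only decides where a query should go.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Route:
    name: str                        # hypothetical label for the target model
    matches: Callable[[str], bool]   # predicate: does this route handle the query?
    location: str                    # "local" or "cloud"


def contains_any(*keywords: str) -> Callable[[str], bool]:
    """Build a predicate that is true when the query mentions any keyword."""
    lowered = [keyword.lower() for keyword in keywords]
    return lambda query: any(keyword in query.lower() for keyword in lowered)


# Routes are checked in order and the first match wins. The sensitive-data
# route comes first so private text is never sent to the cloud by accident.
ROUTES: List[Route] = [
    Route("hipaa-hedgehog-7b", contains_any("medical record", "patient"), "local"),
    Route("creative-fox-1t", contains_any("poem", "sonnet"), "cloud"),
]

# Everything else falls through to the cheapest local model.
DEFAULT_ROUTE = Route("trivia-hedgehog-1b", lambda query: True, "local")


def route(query: str) -> Route:
    """Return the first route whose predicate accepts the query."""
    for candidate in ROUTES:
        if candidate.matches(query):
            return candidate
    return DEFAULT_ROUTE


if __name__ == "__main__":
    for query in [
        "What is the capital of France?",
        "Analyze this proprietary medical record.",
        "Write a poem about the singularity.",
    ]:
        chosen = route(query)
        print(f"{query!r} -> {chosen.name} ({chosen.location})")
```

Ordering the routes by sensitivity rather than by cost is a deliberate choice in this sketch: a request for "a poem about my patient" stays on the local model.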

7. Strategic Takeaway: Own the Weights

For the Founders and CTOs reading this: Stop building wrappers. If your strategy is "I call OpenAI's API," you do not have a company; you have a toll booth on someone else's road.

The SLM offers a path to genuine IP.

- The Moat is the Fine-Tuning. A generic Llama-8B model is a commodity. But a Llama-8B model that you fine-tuned on 10 years of your specific customer support logs? That is a proprietary asset.

- Own the Brain. When you fine-tune an SLM, you own the weights.
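Before any fine-tuning happens, those support logs have to become training examples. The sketch below shows one way that step might look. It assumes each ticket is a dictionary with hypothetical `question` and `resolution` fields, and it writes a chat-style `messages` layout; the exact schema your training toolkit expects may differ, so treat the field names as placeholders.

```python
import json
from typing import Dict, Iterable, List


def to_training_example(ticket: Dict[str, str]) -> Dict[str, List[Dict[str, str]]]:
    """Turn one support ticket into a user/assistant message pair."""
    return {
        "messages": [
            {"role": "user", "content": ticket["question"].strip()},
            {"role": "assistant", "content": ticket["resolution"].strip()},
        ]
    }


def to_jsonl(tickets: Iterable[Dict[str, str]]) -> str:
    """Serialize tickets as JSON Lines, skipping any with an empty field."""
    lines = []
    for ticket in tickets:
        question = ticket.get("question", "").strip()
        resolution = ticket.get("resolution", "").strip()
        if not question or not resolution:
            continue  # an unanswered ticket teaches the model nothing
        lines.append(json.dumps(to_training_example(ticket)))
    return "\n".join(lines)


if __name__ == "__main__":
    sample = [
        {"question": " How do I reset my password? ", "resolution": "Use the reset link."},
        {"question": "App is broken", "resolution": ""},
    ]
    print(to_jsonl(sample))
```

The filtering line is where the moat is actually built: deciding which tickets are worth learning from is domain judgment that a generic model provider does not have.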

Sovereignty is the ultimate luxury. In a world of rented intelligence, the company that owns its own brain wins.

8. Conclusion: The Return to Techne

The Greeks had a word for craft-knowledge: Techne. It wasn't abstract theory; it was the hands-on knowledge of the carpenter.

The "God Models" promised us Episteme (Universal Knowledge). They promised to be the Oracle. But businesses don't need Oracles. They need Techne. They need tools that work, tools that are sharp, and tools that fit in the hand.

The era of "Magic AI" is ending. The awe is wearing off. We are entering the era of "Engineering AI." It is less romantic. It is harder work. It is smaller. And, as Schumacher would say, it is beautiful.
